In science, negative results all too often never see the light of day. That is a real problem, because biasing the literature towards positive findings discards information that the negative findings would otherwise have provided. The bias often stems from a drive to squeeze statistical significance out of data. But significance testing should in no way be the criterion by which results are deemed important. Relying on significance introduces publication bias, where studies that do not meet expectations are simply thrown away.

The consequences are most severe when the null hypothesis of interest is actually true. With a 5 % significance threshold, the roughly 5 % of studies that show a false positive (statistical significance by chance) would then be selected for publication, whereas the other 95 % that fail to reject the null hypothesis would fade from recognition in researchers’ file drawers (Scargle, 1999). Meta-analyses built on that literature then over-represent positive results and can misleadingly conclude that effects are even stronger.
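The file-drawer arithmetic can be checked with a small simulation. The sketch below is illustrative only: the function names `simulate_file_drawer` and `mean_abs`, the number of studies, the group size, and the choice of a two-sided z-test with known unit variance are all assumptions made for the example, not details taken from Scargle (1999).

```python
import random
import statistics
from math import sqrt


def simulate_file_drawer(n_studies=2000, n_per_group=30, seed=0):
    """Simulate studies of a true null effect; 'publish' only significant ones.

    All parameters are illustrative assumptions.
    """
    rng = random.Random(seed)
    z_crit = 1.96  # two-sided 5 % threshold of a z-test
    se = sqrt(2 / n_per_group)  # std. error of a mean difference, unit variance
    all_effects, published = [], []
    for _ in range(n_studies):
        # Both groups come from the same distribution: the null is true.
        treated = [rng.gauss(0, 1) for _ in range(n_per_group)]
        control = [rng.gauss(0, 1) for _ in range(n_per_group)]
        diff = statistics.fmean(treated) - statistics.fmean(control)
        all_effects.append(diff)
        if abs(diff) / se > z_crit:  # significant by chance alone
            published.append(diff)
    return all_effects, published


def mean_abs(values):
    """Average magnitude of the observed effects."""
    return statistics.fmean([abs(v) for v in values])


all_effects, published = simulate_file_drawer()
share = len(published) / len(all_effects)
print(f"share of studies reaching significance: {share:.3f}")
print(f"mean |effect|, all studies:       {mean_abs(all_effects):.3f}")
print(f"mean |effect|, published studies: {mean_abs(published):.3f}")
```

Under these assumptions, about one study in twenty clears the threshold even though no effect exists, and the "published" subset shows a much larger average effect than the full set. That inflated subset is what a meta-analysis of the published literature would see.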

The danger of over-emphasizing significant results is perhaps best demonstrated by the supposed link between the MMR vaccine and autism, first proposed by Andrew Wakefield (Wakefield et al., 1998). The study reported a positive connection despite being poorly conducted. Selection bias was most evident in the fact that the study recruited children whose parents were concerned about the MMR vaccine and were already seeking damages from pharmaceutical companies. No data from controls were provided, and the sample (12 children) was far too small to support any credible inference. Since then, carefully controlled studies from around the world have consistently failed to replicate Wakefield’s findings or show any correlation. In Finland, the National Public Health Institute examined 3 million children who had received the vaccine between 1982 and 1996 and found no connection. Unfortunately, public perception had by then swung heavily against the vaccine, as Wakefield’s flawed findings were widely reported and emphasized in tabloids and journals alike. Vaccination rates fell sharply, even below the threshold needed to sustain herd immunity. Measles, mumps, and rubella, which had all been eliminated in many parts of the developed world, began to resurface and re-establish themselves.

Studies reporting effective treatments are more likely to be published than studies that report no effect (Smith, 2006). But the direction of research should not be dictated by significance scouring and data scooping. It is equally important to recognise the role of negative results in advancing science. All data should be reported: information gleaned from negative results can steer researchers away from repeating the same mistakes or redundant experiments, saving them the effort. Negative results show what doesn’t work.

Crucially, unpublished results can also indicate the extent of any publication bias, if it exists. The effect sizes of published and unpublished studies can be compared, and any systematic difference would point to publication bias. Unpublished results also allow us to check whether effect size correlates with sample size. If it does not (effect sizes are the same for small and large studies), there is no indication of publication bias.
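Both checks described above can be sketched on simulated data. This is a rough illustration rather than an established procedure: the true effect of 0.2, the range of sample sizes, the rule that only significantly positive studies get published, and the helper names `pearson`, `simulate_studies`, and `summarize` are all assumptions made for the example.

```python
import random
import statistics
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)


def simulate_studies(n_studies=3000, true_effect=0.2, seed=0):
    """Return (sample_size, observed_effect, was_published) per study."""
    rng = random.Random(seed)
    studies = []
    for _ in range(n_studies):
        n = rng.randint(10, 200)         # per-group sample size (assumed range)
        se = sqrt(2 / n)                 # std. error, unit variance assumed
        effect = rng.gauss(true_effect, se)
        published = effect / se > 1.96   # assumed filter: significant positives only
        studies.append((n, effect, published))
    return studies


def summarize(studies):
    """Compare published vs unpublished effects and size/effect correlations."""
    pub = [(n, e) for n, e, p in studies if p]
    unpub = [(n, e) for n, e, p in studies if not p]
    return {
        "mean_published": statistics.fmean([e for _, e in pub]),
        "mean_unpublished": statistics.fmean([e for _, e in unpub]),
        "r_all": pearson([n for n, _, _ in studies], [e for _, e, _ in studies]),
        "r_published": pearson([n for n, _ in pub], [e for _, e in pub]),
    }


for name, value in summarize(simulate_studies()).items():
    print(f"{name}: {value:.3f}")
```

In this setup the published studies report a larger mean effect than the unpublished ones. Across all studies, sample size and effect size are essentially uncorrelated, whereas among the published studies alone the correlation is clearly negative, because small studies only clear the significance bar when they happen to observe a large effect.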

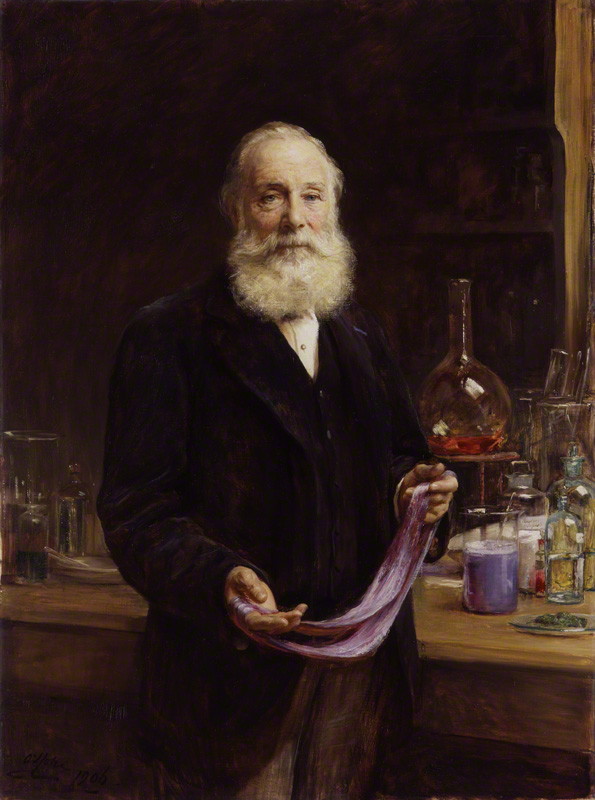

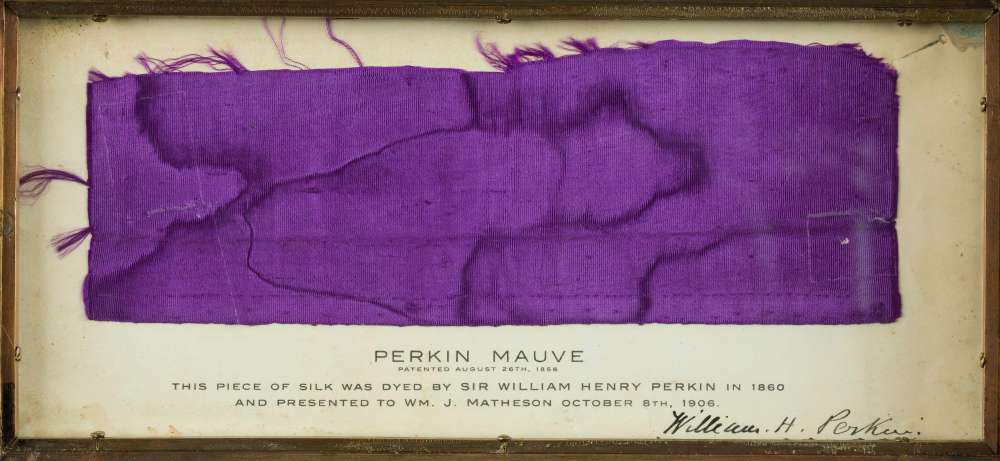

The story of Sir William Perkin is perhaps a great demonstration of the power of negative findings. In 1856, the then 18-year-old William Perkin set out to synthesize quinine, an alkaloid derived from cinchona bark. Quinine had been introduced to Europe in the 17th century by Spanish missionaries returning from South America, who recognised its medicinal properties, and its use as an antimalarial treatment quickly became widespread. But it was difficult and expensive to extract, because the cinchona tree grew only at certain locations and elevations in South America and the East Indies. A more cost-effective source of quinine was in demand. In his attempt to synthesize it, Perkin used coal tar as a raw material. He isolated a coal tar derivative (aniline) and oxidised it in a mixture of potassium dichromate and sulfuric acid. A black, sticky sludge precipitated in his test tube. Clearly, Perkin had failed. But little did he know the significance of what he had just done. As he was washing out his test tubes, Perkin noticed an intriguing purplish residue left behind. Treating the precipitate with ethyl alcohol, he found that it formed a purple solution that vividly dyed the cloth he was using a magnificent violet. Perkin realised he had created the first synthetic dye, which he called mauveine. Remarkably, the colour persisted even when exposed to light. Recognising the huge financial rewards that could come of this, Perkin obtained a patent and set about marketing his discovery. By the next year, he had set up a plant for the industrial production of aniline dyes. Perkin’s failure was not a failure after all.

Perkin’s accidental discovery revolutionized industrial and organic chemistry and spurred attempts to better understand the structure of organic molecules. Perkin had unwittingly invented the first process for manufacturing dyes through chemical synthesis. Britain’s coal-tar industry became the largest market for dyes. Major advances in perfumery followed, and chemists were spurred on by the intriguing potential of extracting substances from coal tar. New discoveries followed: synthetic fibres, fertilizers, drugs, and plastics. At an 1862 exhibition in London, Queen Victoria appeared in a silk gown dyed with Perkin’s mauveine. The colour became so popular that the decade itself was named the Mauve Decade.

But Perkin always wanted to give back to science. After making some money from his business, he retired from industry and devoted his later life to fundamental scientific research. Today, the “Perkin reaction” is well known in organic chemistry and is used to make cinnamic acids, which can be converted into a number of other chemical compounds.

Had Perkin simply disregarded his negative finding, none of this would have happened. It is clear, then, that journals ought to publish negative results, because all knowledge is useful and the practical potential of a finding might not be immediately evident.

Bibliography

Scargle, J. D. 1999. Publication bias (The “File-Drawer Problem”) in scientific inference. Journal of Scientific Exploration 14(1): 91–106.

Smith, R. 2006. The Trouble with Medical Journals. Journal of the Royal Society of Medicine 99(3): 115–119.

Wakefield, A. J., Murch, S. H., Anthony, A., et al. 1998. Ileal-lymphoid-nodular hyperplasia, non-specific colitis, and pervasive developmental disorder in children. Lancet 351: 637–641 (Retracted in 2010).

Featured image source: National Museum of American History.